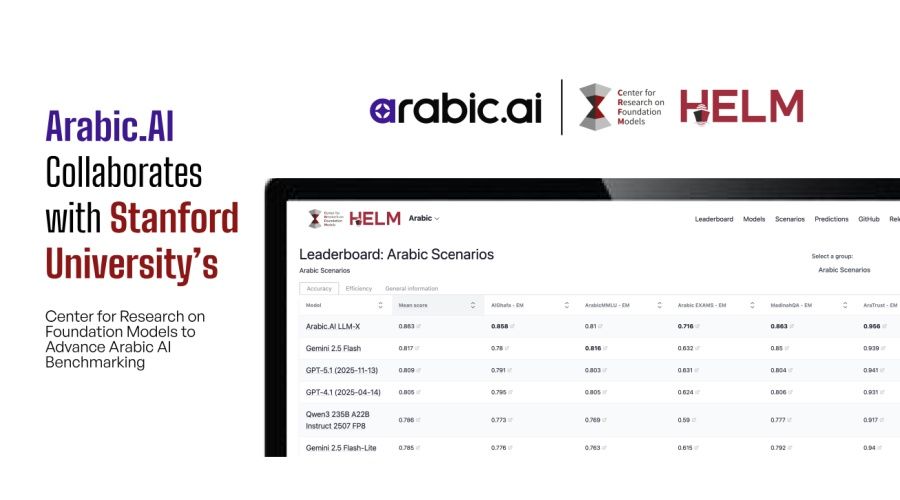

Arabic.AI partners with Stanford to launch the first holistic benchmark for Arabic AI models

Stanford, Arabic.AI Build First Arabic AI Benchmark

MENA Signal • February 6, 2026

Arabic.AI has partnered with Stanford University’s Center for Research on Foundation Models (CRFM) to establish the first holistic benchmark for evaluating Arabic large language models. The project extends Stanford’s HELM (Holistic Evaluation of Language Models) framework to Arabic. This gives a trusted reference point to a language with 400 million speakers. The first phase is now complete. It includes an Arabic leaderboard and new evaluation methods for conversational AI. Enterprises now have a clear foundation for measuring model performance against global standards.

Why MENA Founders Should Care

### Capital

Investors are done funding AI based on pitch decks and promises. This benchmark introduces hard metrics to the MENA ecosystem. You can no longer claim superior Arabic understanding without data. VCs will use these scores to filter deals quickly. If your model doesn't rank high on the HELM benchmark, your valuation drops. It creates a clear, objective bar for due diligence. Founders must optimize their models for this specific framework to attract capital. It shifts the power dynamic from marketing narratives to raw engineering performance.

### Consolidation

The market cannot support dozens of mediocre Arabic language models. A unified benchmark exposes exactly who is lagging behind and by how much. Smaller players without the resources to improve their scores will face immediate extinction pressure. Large enterprises will stop buying from vendors that lack verified performance data. This forces mergers and acquisitions across the board. Competitors will have to team up to pool data or they will die. The days of fragmented, untested Arabic solutions are over. The industry is moving toward a concentration of power among the proven few.

### Opportunity

This standardization clears the path for MENA startups to expand globally. You don't need to build a foundation model to win. You can build specialized applications or fine-tuning layers whose performance is validated against this rigorous benchmark. Global tech giants need accurate Arabic capabilities but lack the local nuance. Founders can now sell verified, high-performance Arabic integrations to these massive platforms. It validates the region as a critical market for AI infrastructure. You move from being a local player to being a necessary global partner. The benchmark proves that Arabic AI is an enterprise-grade requirement.

The Context

Arabic is spoken by over 400 million people but has historically lagged in AI development. Most large language models prioritize English or Chinese data. This left regional enterprises relying on translated or low-quality native models. Arabic.AI launched its LLM-X and LLM-S models to address this gap. This collaboration with Stanford shifts the ecosystem from proprietary, opaque testing to open, academic rigor. It provides the technical infrastructure needed to modernize the region's digital economy. Founders previously operated in the dark; now the lights are on. Arabic models will now be held to the same evaluation rigor as English and Chinese ones.

🌶️ Spicy Take

Most Arabic LLM startups are about to become obsolete features, not companies. If you aren't benchmarking on Stanford's HELM, you're just building a toy.

What's Next

Watch for major VCs to require HELM scores in term sheets. Expect a wave of shutdowns among low-scoring competitors within six months.

Written for founders building in the Middle East and North Africa